|

| Zora Neale Hurston |

For many sceptics, this titular phrase is one which engenders rolling eyes and huffs of exasperation, and this is a problem in itself. In fact, a swift google brings up two mainstream articles in the first results, one from Forbes in June 2020, the other on CNN as recently as September 2021, both of which are quite scathing of this utterance. The exasperation is well-motivated, in some respects, not least because what qualifies as research for many of those uttering this phrase is to google and find the first blog which seems to align with what they already believe, but there are pitfalls in this approach and, because of some historical events, it requires a degree of sensitivity. Here I want to address some of the history, some of the pitfalls, and some of the exasperation, but also to provide a way forward, by addressing the statement more constructively, as opposed to dismissing or disparaging it.

Let's start with the history, something of a tradition in these parts.

Distrust of medical science is, in some communities, especially in the US, a matter of historical contingency, which is to say it's heavily reliant on past experience, and well founded. To some of us, such a view may seem a bit off, but this is our privilege speaking.

Even today in some so-called developed nations, some demographics have measurably worse medical outcomes and, indeed, measurably different treatment by medicine. One metastudy showed, as recently as 2017, US healthcare workers exhibited the same implicit biases as in the wider population, leading to measurably worse outcomes[1]. A huge number of myths pervade the medical profession, a profession we'd expect to be above such bias by the simple expedient of being rooted in science, yet still those myths persist. The myth of black people's reduced sensitivity to pain, for example, is incredibly common, resulting in ineffective treatment recommendations[2]. Worse, black people who manifest pain are often seen as simply drug-seeking[3].

And this is when medicine is ostensibly doing its job properly. Black people in the US and their relationship to healthcare professionals has far worse than this in it to fuel distrust. One occurrence in particular quite rightly makes them wary, not least because of who it involved.

In recent months in the UK, there's been a lot of discussion about something known as the Nuremberg Code. When I say discussion, what I actually mean is a small but extremely loud contingent of antivaxxers making a lot of noise about it. The Nuremberg Code is not, contrary to the witterings of this particular contingent, any kind of law, or legally-binding framework, it's a guide for ethical best practice in experimentation involving humans. As the name suggests, it comes from the trials of Nazi scientists who'd conducted really atrocious experiments on Jews, Romani, Poles and disabled Germans, including children.

The US, however, has a similar atrocity which, while not on the same scale, comprehensively failed to live up to the standards set at Nuremberg. This was the famous Tuskegee experiment, in which 600 black Americans were told they were being treated for "bad blood" as part of a study to cure their ailments. In fact, the term "bad blood" was a mask for the real purpose of the study, the purpose of which was to see what would happen if syphilis - 399 of the men were infected - was allowed to run untreated. Beginning in 1932, this experiment ran for forty years, only being ended in 1972 when the truth was exposed. The result was 128 deaths, 28 directly from syphilis with the remainder dying of related complications, along with 40 of the men's spouses subsequently infected and 19 cases of congenital syphilis in their children. And who ran this study? The US Public Health Service in concert with the CDC.

This is not a trivial or unfounded fear, and the phrase "do your own research" is extremely well-motivated.

For my part, I actively encourage people to do their own research, but it's hugely important to understand what real research looks like. I'm not saying, of course, you have to go out and get a degree in some area of science; I have no such degree, nor any qualification of note. I am in every sense a layman, and I make no pretence at having any sort of formal scientific education, credential or authority. This blog is and has always been an attempt to bring what I've learned in several decades of just being interested in science, reading about it, and discussing it with those with real expertise. The authority and confidence with which I assert the following to be true (to a first approximation, of course; I get things wrong routinely, as I've always been at pains to express) is a function of having said silly things and been shot down by experts. This has given me some experience in researching science, and I feel sharing it may be of value.

One glaring problem with people doing their own research is this; understanding the relative merits of scientific research and scientific sources is far from being a trivial undertaking. It requires not huge intelligence but an enormous amount of care. I'm pretty much a total dunce (I didn't even finish school or attain any academic qualifications beyond Grade 3 trumpet), but I've learned the hard and painful way to apply simple principles - not always obvious - to assessing research. It's a skill; something to be learned, and it's mostly the skill of working out the trustworthiness of a source and, as with all things expertise, developing a grasp for the variables.

What is quite trivial is to find a study saying - or appearing to, at any rate - whatever you want it to say. All else aside, the way language is used in a scientific paper is not at all the same as the way we use it in the vernacular. This distinction between vernacular usage and 'terms of art' is enormous in science. For the most part, where scientific statements can't be expressed in purely mathematical terms, we have well-defined jargon (this is, in fact, exactly what jargon is for), but even jargon is distinct from terms of art, because terms of art are everyday, mundane terms whose usage in the vernacular can be quite loose, but whose usage in a rigorous field is always extremely narrowly-defined, precisely to avoid ambiguity.

An obvious example of this is the word 'theory'. In the vernacular, it's a guess at the underlying cause of something. It more closely resembles the scientific term 'hypothesis' in meaning but, even there, the synonymity isn't direct. In science, however, the word has a very precise meaning as an integrated explanatory framework encompassing all the facts, observations, hypotheses and laws pertaining to a specific area of study. These are very different, of course; an hypothesis or guess is the very bottom rung of the ladder in scientific terms, while a theory is at the very top. Even extremely mundane words like 'show' have very precise meanings, and it isn't always obvious which are the terms of art in a paper cast mostly in natural language.

That said, with a little care and practice, it's usually not incredibly difficult to tease out the conclusion of a paper, but the conclusion is only the beginning of the story. Even when you find a paper you're justifiably confident says what you think it says, there are other problems to be accounted for. The first and most important of these is experimental design.

Even unambiguous studies can be fraught. Just to pick one example not at all at random, there was a study uploaded to the MedRxiv pre-print* server in August by some Israeli researchers which compared acquired immunity (via infection) and conferred immunity (via vaccination) in breakthrough infections[4]. The study unambiguously assessed acquired immunity as offering superior protection. All well and good. There is a problem, though, and it's one of experimental design. Here's the problem laid out in the paper itself:

Lastly, although we controlled for age, sex, and region of residence, our results might be affected by differences between the groups in terms of health behaviors (such as social distancing and mask wearing), a possible confounder that was not assessed.

To somebody with knowledge or experience in experimental design, this is a huge red flag. It might not be immediately obvious why, but a simple analogy should suffice to expose it.

Imagine a study done in a school. Every day, every child in the school is given a meal containing precisely 1,000 calories. After careful analysis after a period of time, the study measures weight gain in all of the students, albeit by differing amounts from student to student. The study concludes 1,000 calories per day is too much.

Then you look at the methodology of the study and, in listing its limitations, it declares up front there was no control for the notion of children getting food from somewhere other than school. You'd have to treat the conclusion of the study with a good deal of circumspection, and you'd be remiss if you allowed the conclusion of this study to inform your dietary recommendations for schoolchildren. I'll be surprised if this paper passes peer-review, and it almost certainly won't without some serious revision, which is going to be extremely difficult given the nature of the study and the fact the confounder in this case is an incredibly difficult one to control for without it being a closed study, and entirely impossible for data already gathered.

These are the kinds of things you get very good at spotting with a little experience. Good experimental design is critical, especially when dealing with confounding variables**. In this instance, we're dealing with the most unpredictable confounding variable of them all; human behaviour. I won't belabour this point any further, as I have a large piece on statistics and probabilities quite close to completion, and I'll be covering this and other issues in considerable detail.

There are other things to note. One reasonable indicator of the quality of a study is the publication it appears in. This study was published on a pre-print server. This is pretty much the bottom rung. Actually, that's not even really true. The bottom rung is the vanity journal, but I'm going to let somebody else tell you about those shortly.

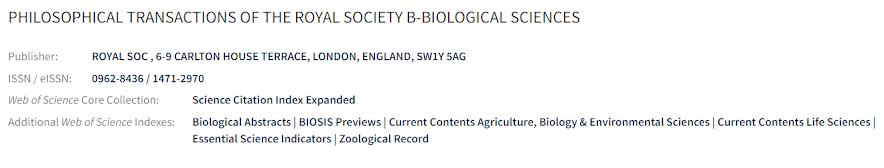

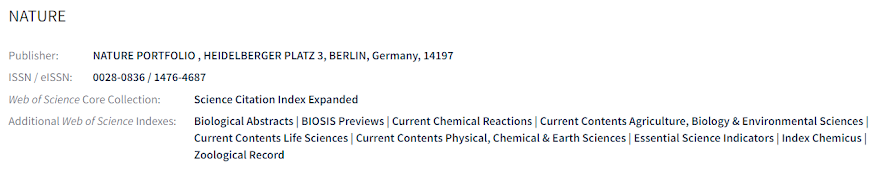

Scientific journals vary quite a bit in quality. There are indicators available to ascertain the quality of the journal. Some journals really needn't be examined too closely, such as Nature, or Philosophical Transactions of the Royal Society, the world's oldest scientific journal. Any peer-reviewed study published in these can be presented with a reasonable degree of confidence. For these and other journals, there are clues available.

The best place to start with a journal you don't recognise is the Master Journals List. You can search for the journal in question there. Here's the entry for Phil. Trans. B., the Royal Society's biological sciences journal.

And the entry for Nature.A pretty good rule of thumb is, if you can't find an entry for the journal in the Master Journals List, it's lacking something. Precisely what it's lacking - assuming it's not a vanity journal - is up next; impact.

The impact factor of a scientific journal† is easily the best metric of the quality of a journal. It was initially devised for librarians, so they could spend their meagre budgets on the most-read journals, because institutional access to scientific literature does not come cheap. It's come to be an important metric in its own right, but it does come with some important caveats, because there is some subjectivity involved. Once we see how the impact factor is derived, it becomes fairly obvious why. Impact factor is a number derived from citations, which we're going to look at in more detail next.

In particular, the impact factor of a journal is the result of a simple calculation, derived from the number of citations in a given year from papers published in the preceding two years, divided by the total number of citable items published in those two years. Of course, this is where the subjectivity comes in because, quite obviously, some scientific fields get more attention than others. What this means in practice is the impact factor of a journal can only reasonably be gauged against other journals publishing in the same field of research. As a clear example, research in oncology (cancer) is an enormous field, driven by need, so publications specialising in cancer are far and away the most read and cited. CA: A Cancer Journal for Clinicians, for example, has an impact factor of a whopping 223.679, while the The Coleopterists Bulletin has an impact factor of 0.697. So, while God may have an inordinate fondness for beetles††, scientists are considerably more interested in cancer.

In general terms, an impact factor of 1 is considered average, with anything lower below average. An impact factor of 3 is good, while an impact factor above 10 is excellent. For perspective, around 70% of journals have an impact factor greater than 1, while fewer than 2% have an impact factor greater than 10. Again, though, it's incredibly important to compare like with like. I've never read anything published in The Coleopterists Bulletin, but I'm sure it's an excellent journal, not least because coleopterists - and entomologists in general - tend to be quite diligent in my experience.

Now we come to the final complication in assessing the quality of a paper; citations.

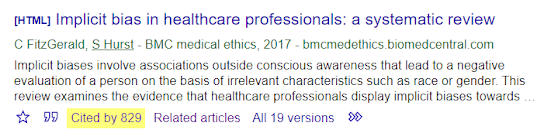

You can loosely think of the citations of a paper as being what other people are saying about it. If it has a lot of citations, we have a good indication it's an important paper. Most often, citations are used for support in the research of others. A really quick way to check for citations is simply to copy the title of the paper and do a quick search on Google Scholar. For example, looking at my citation [1], I pop it into Scholar and see how many times it's been cited. Here's the result.

As we can see, the paper has been cited 829 times in the four years since publication. If you click through the "cited by" link, you can find further citations of those papers, and so on. This is a pretty solid indicator a paper isn't just important but one whose conclusions are leaned upon by other research, and is a pretty useful guide to robustness.That's the peer-reviewed material, then, but what about pre-prints and other sources? Well, let's start with pre-prints, because these are of particular import at the moment.

As we've seen, a pre-print is a paper made available prior to any process of peer review. I won't delve too deeply into peer-review here, as we're already tending toward my usual failure of brevity, but it's exactly what the name suggests, namely review of your work by peers - other experts in your field. Of course, not all peers are created equal. See further reading links for more on this process.

In any event, pre-prints are often made available without review. This isn't a bad thing, of course, but the results have to be viewed with considerably less confidence than peer-reviewed results because, put simply, they haven't been checked. That said, there are still ways to get information. Most of the time, such as in the case of the Israeli study we've been discussing here, you can go straight to the pre-print server hosting the paper, where there is usually a comments section. Much of it is often blather, but there are sometimes good dissections pointing to problems. More importantly for our purposes here, though, there are also usually links to specialist pre-print discussion sites.

Most often, the "metrics" tab is the shortest page, so I tend to go there and see if any of these sites are discussing it, though it usually appear at the bottom of all tabs for the paper. In this case, we can see it's being discussed on PubPeer. Click on that link, and it takes us to a discussion of the paper by experts. In this case, we can see discussions from some really quite excellent scientists, such as Andrew Croxford, who we met in The Cost of Liberty, and Christina Pagel, member of Independent SAGE, whose social media output has been one of my primary launch points in all my COVID-related research. Again, once you start to employ these techniques in assessing research, you start to develop a really good sense of what's what, and where Humian confidence should be properly apportioned‡.Refs:

[1] Implicit bias in healthcare professionals: a systematic review - Fitzgerald and Hurst - NIH 2017

[2] Racial bias in pain assessment and treatment recommendations, and false beliefs about biological differences between blacks and whites - Hoffman et al - NIH 2016

[3] Taking Black Pain Seriously - Akinlade - NEJM 2020

[4] Comparing SARS-CoV-2 natural immunity to vaccine-induced immunity: reinfections versus breakthrough infections - Gazit et al 2021.

Further reading:

Qualified Immunity - SARS-CoV-2 and expertise.

Very Able - Why expertise is fundamentally about understanding variables.

Where do You Draw the Line? - Why expertise matters.

The Map is Not the Terrain - The pitfalls of natural language.

* A pre-print is a paper made available prior to undergoing peer-review. Peer-review is a process critical to ensuring good quality science in publications. It involves experts in the relevant field searching the paper, checking the maths, looking at methodology and design, essentially working very hard to find anything wrong with the paper. See links in further reading for more on peer-review.

** A confounding variable is any variable with the potential to skew the results.

† The Impact Factor list provided here is from 2019, as the 2020 list is only available as a pdf at this time.

†† There's a famous and quite probably apocryphal story about biologist J.B.S. Haldane in discussion with a group of theologians. When asked if his studies had revealed anything interesting about the nature of the creator, Haldane is said to have replied he appeared to have an inordinate fondness for beetles. Whether this story is true or not, Haldane did make similar comments in his book What is Life, published in 1949: "The Creator would appear as endowed with a passion for stars, on the one hand, and for beetles on the other, for the simple reason that there are nearly 300,000 species of beetle known, and perhaps more, as compared with somewhat less than 9,000 species of birds and a little over 10,000 species of mammals. Beetles are actually more numerous than the species of any other insect order. That kind of thing is characteristic of nature."

‡ Hume tells us we should apportion our confidence to the evidence available and the degree of consilience between our models and the data.

No comments:

Post a Comment

Note: only a member of this blog may post a comment.